The Existential Loneliness of Prompt Engineering

March 18, 2023 Cory Doctorow Generative AI James Bridle Casey Newton Evgeny Morozov Matte Blanco Marcel Duchamp Rob Horning

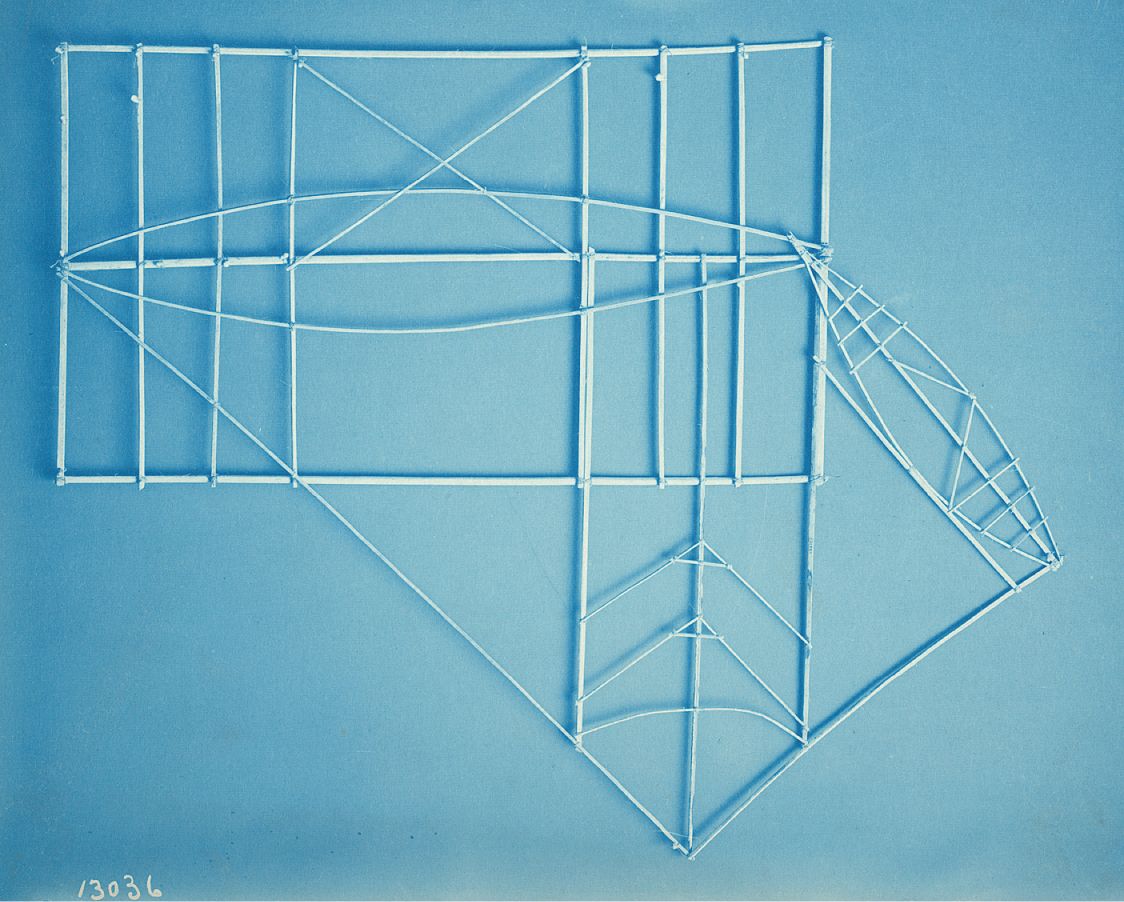

Marshall Islands Navigation Chart

A while back, discussing AI and the creeping influence of that technology on our jobs, a friend remarked to my wife that they thought all creative workers could become “prompt engineers”.

I can see the allure of that idea, of history broadly repeating itself. We would become stewards of the AI, just as the workers of yore started operating machines instead of manufacturing by hand. ChatGPT became such a runaway success precisely because it simplified the interface for interacting with powerful technology to something we already know: asking questions.

The creative process becomes mere prodding

If you believe that this is the no-code future they’ve been promising, then chat interfaces move us ever closer to that idea of a “bicycle for the mind”. Enter a good prompt and it becomes supercharged by capable AI.

In a recent piece for The Guardian, James Bridle writes about the wider cultural phenomenon at play:

The abilities of these programs to conjure up strange new worlds in words and pictures alike entranced the public, and the desire to have a go oneself produced a growing literature on the ins and outs of making the best use of these tools, and particularly how to structure inputs to get the most interesting outcomes.

I can only speculate whether this kind of creativity in using the software will translate into real jobs, but for now, I find the idea of relegating all creative work to a powerful technology truly… lonely: it reduces the creative process to mere prodding and moves all the legwork to the machines. Rather than supercharging us, the idea that we’ll one day work solely by engineering prompts feels crippling: it assumes we can’t accomplish anything ourselves.[1]

On a recent episode of Hard Fork, the hosts discussed American lawmakers’ attempts to regulate the speech of AI software. With the culture wars running as deep as they do, some politicians had become fearful that generative AI would become “too woke” and envisioned a future in which every answer would come from a progressive political viewpoint. I don’t remember his exact words, but host Casey Newton quipped at some point that people seem to forget that they can still write themselves. Don’t like the answer? You can write your own!

There’s more to human creativity

He gets to the heart of the issue here: AI is powerful, but for now, it is, in the words of Cory Doctorow, “a solution in search of a problem”. It’s possible to generate impressive text, but so far it serves very little purpose. And even if you disagree with the text an AI chatbot puts out, you can make a better argument. Most likely, you’ll have more intelligent or novel ideas than a statistical engine that’s prone to regurgitating previous ideas.

For now, AI has severe shortcomings: though its hallucinations are sometimes mistaken for creativity, it can’t create anything truly novel. It lacks the intuition and human experience required to come up with new ideas. It just repeats, endlessly, what has already been done and what is the most probable outcome of an idea.

Call me naive, but I think there’s inherently more to human creativity than this. Call it a spark, call it an “aura”, but I firmly believe we are more than the “stochastic parrots” that AI engineers like to equate humans with.

Update: Evgeny Morozov doubles down on this idea in an article for The Guardian, explaining how human creativity differs fundamentally from pattern matching:

Human intelligence is not one-dimensional. It rests on what the 20th-century Chilean psychoanalyst Ignacio Matte Blanco called bi-logic: a fusion of the static and timeless logic of formal reasoning and the contextual and highly dynamic logic of emotion. The former searches for differences; the latter is quick to erase them. Marcel Duchamp’s mind knew that the urinal belonged in a bathroom; his heart didn’t. Bi-logic explains how we regroup mundane things in novel and insightful ways. We all do this — not just Duchamp.

[1] Rob Horning writes: “In general, automation typically works as deskilling, concentrating agency in the hands of the few to wield over the many. Generative AI is built on the premise of appropriating people’s past work, abstracting some governing principles from it, and using those abstractions as a template to stamp out subsequent work. As with previous forms of automation, it allows management to turn more human workers into machine minders whose creative input is not permitted, who are debarred from any leverage over the production process and reduced to another machinic input into it.”