The Flipside of Fake

July 10, 2023 Post-Truth Generative AI Photography Midjourney Boris Eldagsen Pia Riverola

This restaurant had put tables out, but there was not a single guest. You could watch the tablecloth flapping in the sun, slowly forming waves.

Indulge me for a moment. Lately I’ve been noticing the occasional street scene that looks like an AI-generated image. That’s a clear reversal of logic: I’m aware that AI mimics the real world, not the other way around. It’s just that the aesthetics were similar—and though I was objectively looking at reality, my brain would signal that I was seeing something artificial. What was going on?

I encounter these moments on my walks through Berlin, always during the day. It’s summer here, and we’ve been experiencing weeks of bright, clear weather when the sun floods the streets with light. Not only does that make the colors pop, it also illuminates the shadows for a strangely hyperrealistic look. And then, every once in a while, the city’s chaotic layers would overlap to form a scene that looked to my brain as if it had been assembled by an algorithm rather than by chance.

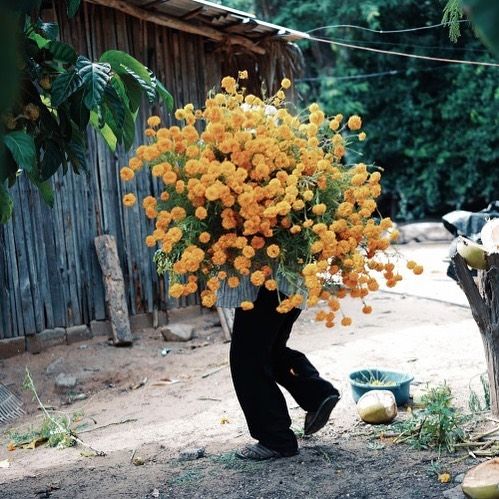

This (real) photo by artist Pia Riverola shows a huge bushel of flowers, one of the telltale signs of an AI-generated image—and immediately looks fake to me.

All of this is undeniably weird: After half a year of looking at AI images I’ve somehow accepted their oddities and instead spot the uncanny in the everyday.

A few weeks ago, we had a friend visit from Sweden and got talking about the advent of fakes. “I’m not so worried that people will think an AI image is real,” she said. “But what if people no longer accept a real photo as proof that something happened?” In other words, what if the same reversal I’ve been experiencing becomes commonplace and the real things start looking fake?

It’s a notion echoed by the photographer Boris Eldagsen, who caused a minor scandal in the photo world by winning a photo award with an AI-generated image and then protesting that very fact.[1] In the latest issue of Der Spiegel, the photographer is quoted as saying that “In the future, we have to approach any image with the assumption that it isn’t real.”

What’s been bothering me about all this, including in my own writing (and lord knows I’ve been writing about this a lot), is how speculative the take on AI has been: We talk a lot about future scenarios, threats, and fears. But I sometimes feel like the speculation about these possible consequences of AI-generated photography is distracting from what photography demonstrably does to the present. Lately, I’ve been wanting less sci-fi and more gritty realism.

That’s why the latest article from photo critic Jörg Colberg resonated with me. In it, he analyzes odd photos of Russian warlord Yevgeny Prigozhin that make him look ridiculous, all in the wake of Prigozhin’s attempted coup in Russia. Colberg’s wider point:

[…] there is more to photography and fakeness. It’s good to be aware of photographs being made to look real even though they are not. But it’s also important to be aware of photographs that are real even though they either don’t look real or they look too ridiculous to be real.

A vintage car, sticky windshield full of linden seeds, illuminated from the back.

Update: That didn’t take long: The New York Times reports that current coverage of the Israel-Hamas conflict is marred by widespread doubt on social media that the images shared are actually real.

There has, of course, always been the proverbial fog of war, but a mixture of generated-but-credible images and pictures from different conflicts has helped manufacture increasing doubt:

For now, social media users looking to deceive the public are relying far less on photorealistic A.I. images than on old footage from previous conflicts or disasters, which they falsely portray as the current situation in Gaza, according to Alex Mahadevan, the director of the Poynter media literacy program MediaWise.

“People will believe anything that confirms their beliefs or makes them emotional,” he said. “It doesn’t matter how good it is, or how novel it looks, or anything like that.”

[1] Though it bears mentioning that the jury of the award maintains it was aware the image had been generated and decided to nevertheless award him a prize in the “Creative” category, after which Eldagsen very publicly retracted the image, arguing that these types of images shouldn’t qualify. It smacks of an engineered controversy, but that’s beside the point of this article.